
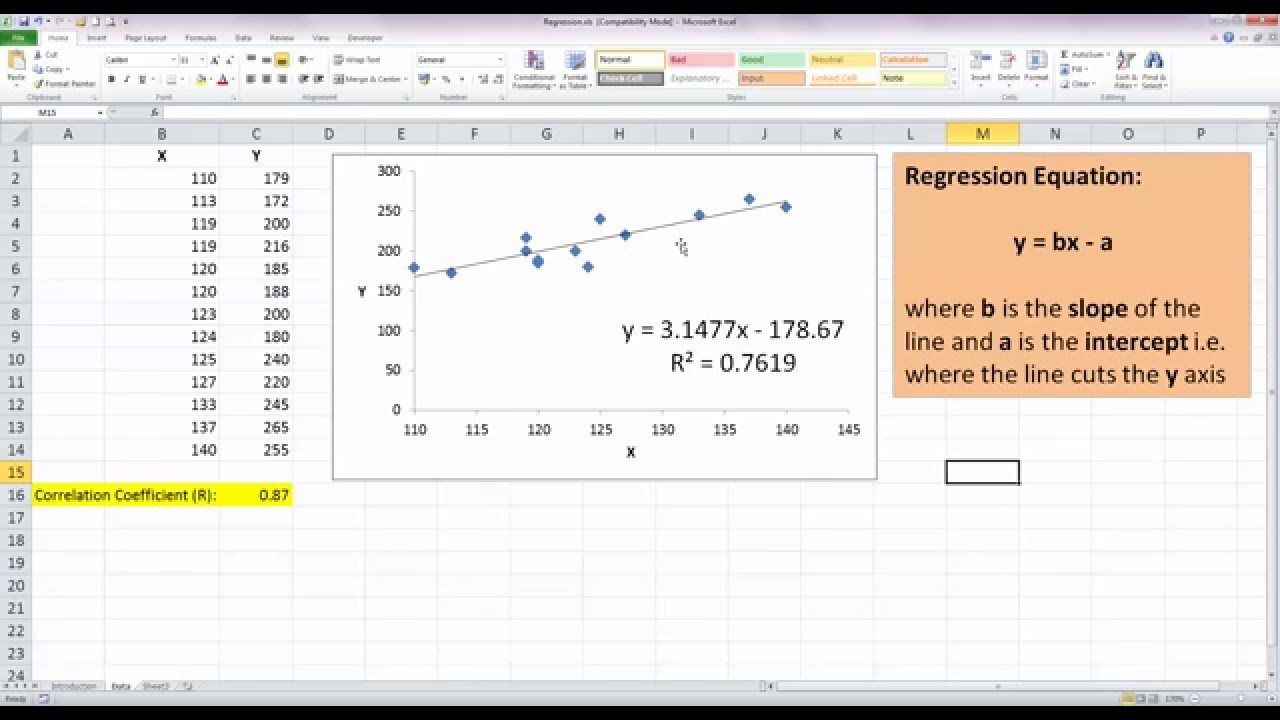

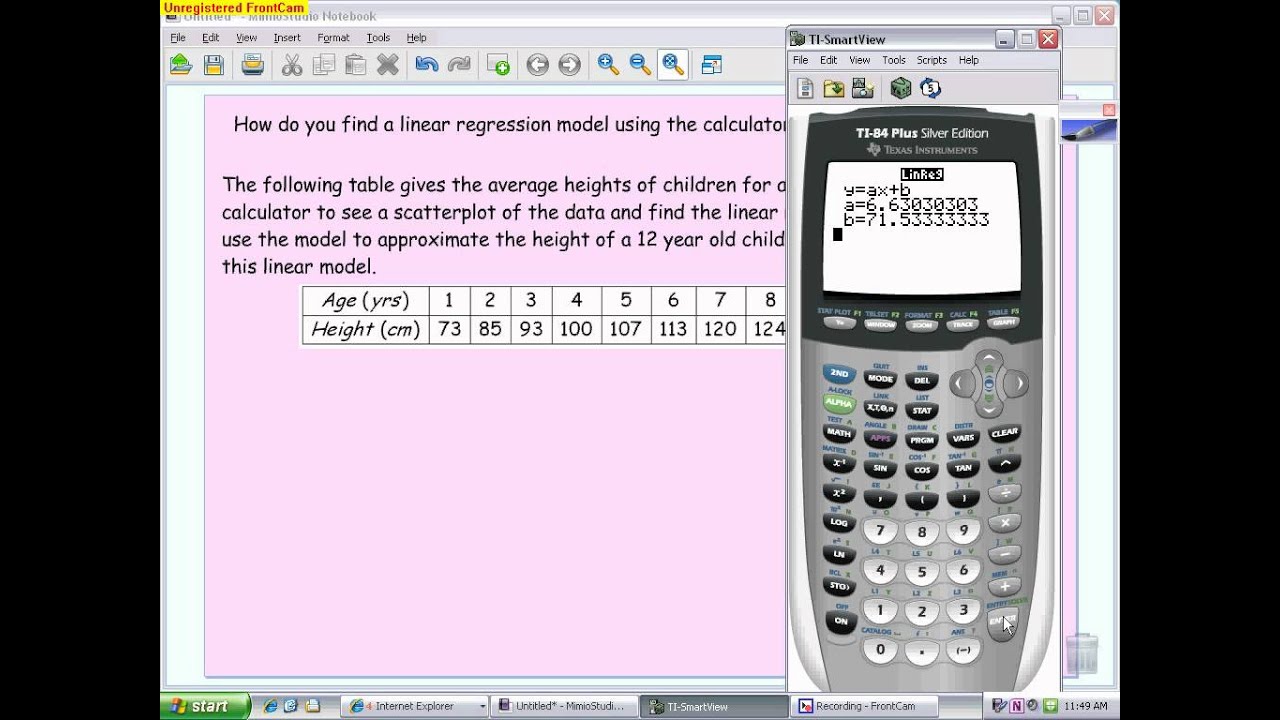

Simple linear regression is a way to describe the relationship between two variables through the equation of a straight line, called the line of best fit, that most closely models this relationship.

A common form of a linear equation in the two variables $x$ and $y$ is

$$y = mx + b$$

The name "linear" comes from the fact that the set of solutions of such an equation forms a straight line in the plane. In this equation, the constant $m$ determines the slope or gradient of the line, and the constant term $b$ determines the point at which the line crosses the y-axis.

Given any set of $n$ data points in the form $(x_i, y_i)$, according to the method of minimizing the sum of squared errors, the line of best fit is obtained when

$$m = \frac{n \sum_{i=1}^{n} x_i y_i - \sum_{i=1}^{n} x_i \sum_{i=1}^{n} y_i}{n \sum_{i=1}^{n} x_i^2 - \left( \sum_{i=1}^{n} x_i \right)^2}$$

where the summations are taken over the entire data set, and

$$b = \frac{\sum_{i=1}^{n} y_i - m \sum_{i=1}^{n} x_i}{n}$$

where the summations are again taken over the entire data set.

Line of best fit (trend line) - A line on a scatter plot that can be drawn near the points to show the trend in the data more clearly.

- A line of best fit that rises from left to right indicates a positive correlation.
- A line of best fit that falls from left to right indicates a negative correlation.
- Strong positive and negative correlations have data points very close to the line of best fit.
- Weak positive and negative correlations have data points that are not clustered near or on the line of best fit.

This article introduces the metrics for assembling simple linear regression lines and the underlying constants, using the least squares method. You can extend these metrics to deliver analyses such as trending, forecasting, risk exposure, and other types of predictive reporting. To learn about statistical functions in MAQL, see our Documentation. The MAQL calculation requires use of Pearson Correlation (r), which is described in Covariance and Correlation and R-Squared.

Linear Regression

Full regression analysis is used to define a relationship between a dependent variable ($y$) and explanatory variables ($X_1$, ...). For tutorial purposes, this simple linear regression attempts to model the relationship between a dependent variable ($y$) and a single explanatory variable ($x$) using a regression coefficient ($\beta$) and a constant ($\alpha$) in a linear equation. For each explanatory value $x_i$, this simple model generates an estimate value for $y_i$. This estimate is denoted as $h_i$ and depends only on $x_i$, $\beta$, and $\alpha$ through the following linear relationship:

$$h_i = \beta x_i + \alpha$$

This simple linear regression equation is sometimes referred to as a "line of best fit." It has the same form as $y = mx + b$, with $\beta$ in the role of the slope $m$ and $\alpha$ in the role of the intercept $b$.

Least Squares Approach

The above model produces only an estimated value. The difference between the actual dependent value $y_i$ and the estimate from the linear model can be represented by an error term ($\varepsilon_i$):

$$\varepsilon_i = y_i - h_i$$

The least squares approach attempts to minimize the sum of the squares of these error terms ($\varepsilon_1^2 + \dots + \varepsilon_n^2$). The following five summary statistics support the calculations for the least squares approach:

- The mean of the explanatory variable ($\bar{x}$).
- The mean of the dependent variable ($\bar{y}$).
- The standard deviation of the explanatory variable ($s_x$).
- The standard deviation of the dependent variable ($s_y$).
- The Pearson correlation between the two variables ($r$).

The result yields the following two equalities for $\beta$ and $\alpha$:

$$\beta = r \, \frac{s_y}{s_x}$$

$$\alpha = \bar{y} - \beta \bar{x}$$

With these two values, the linear regression equation $h$ is fully determined.

Scenario

Let's assume the same scenario as the insurance company in the topic Covariance and Correlation and R-Squared. After you have generated Metric 6: Pearson Correlation (r), defined in that topic, you can immediately calculate metrics for $\beta$, $\alpha$, and the linear estimate $h_i$.
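As a rough illustration of the least squares calculation described in this article, the following Python sketch computes the slope and intercept of a line of best fit in two ways: directly from sums over the data points, and from the five summary statistics (the two means, the two standard deviations, and the Pearson correlation r). The function names and any sample data are hypothetical and are not taken from the insurance scenario or from MAQL. The sketch assumes sample statistics with an n - 1 divisor; using n for all three gives the same slope because the divisor cancels.

```python
import math


def fit_from_sums(xs, ys):
    """Slope and intercept from raw sums over the data set."""
    n = len(xs)
    sum_x = sum(xs)
    sum_y = sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_xx = sum(x * x for x in xs)
    slope = (n * sum_xy - sum_x * sum_y) / (n * sum_xx - sum_x ** 2)
    intercept = (sum_y - slope * sum_x) / n
    return slope, intercept


def fit_from_summary_stats(xs, ys):
    """Slope (beta) and intercept (alpha) from means, standard deviations, and r."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Assumption: sample statistics with an (n - 1) divisor.
    s_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs) / (n - 1))
    s_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys) / (n - 1))
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / (n - 1)
    r = cov / (s_x * s_y)            # Pearson correlation
    beta = r * s_y / s_x             # regression coefficient
    alpha = mean_y - beta * mean_x   # constant term
    return beta, alpha


def estimate(beta, alpha, x):
    """Linear estimate h for a single explanatory value x."""
    return beta * x + alpha
```

For hypothetical data such as `xs = [1, 2, 3, 4, 5]` and `ys = [2, 4, 5, 4, 5]`, both functions give a slope of about 0.6 and an intercept of about 2.2, which illustrates that the two formulations describe the same line.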
